Beyond Just Seeing: Why Your Devices Are Finally Learning to “Understand” the World

Imagine pointing your phone at a cluttered construction site. Instead of just identifying “concrete, metal, hard hat,” it tells you, “The safety barrier is misplaced, creating a trip hazard near the active zone.”

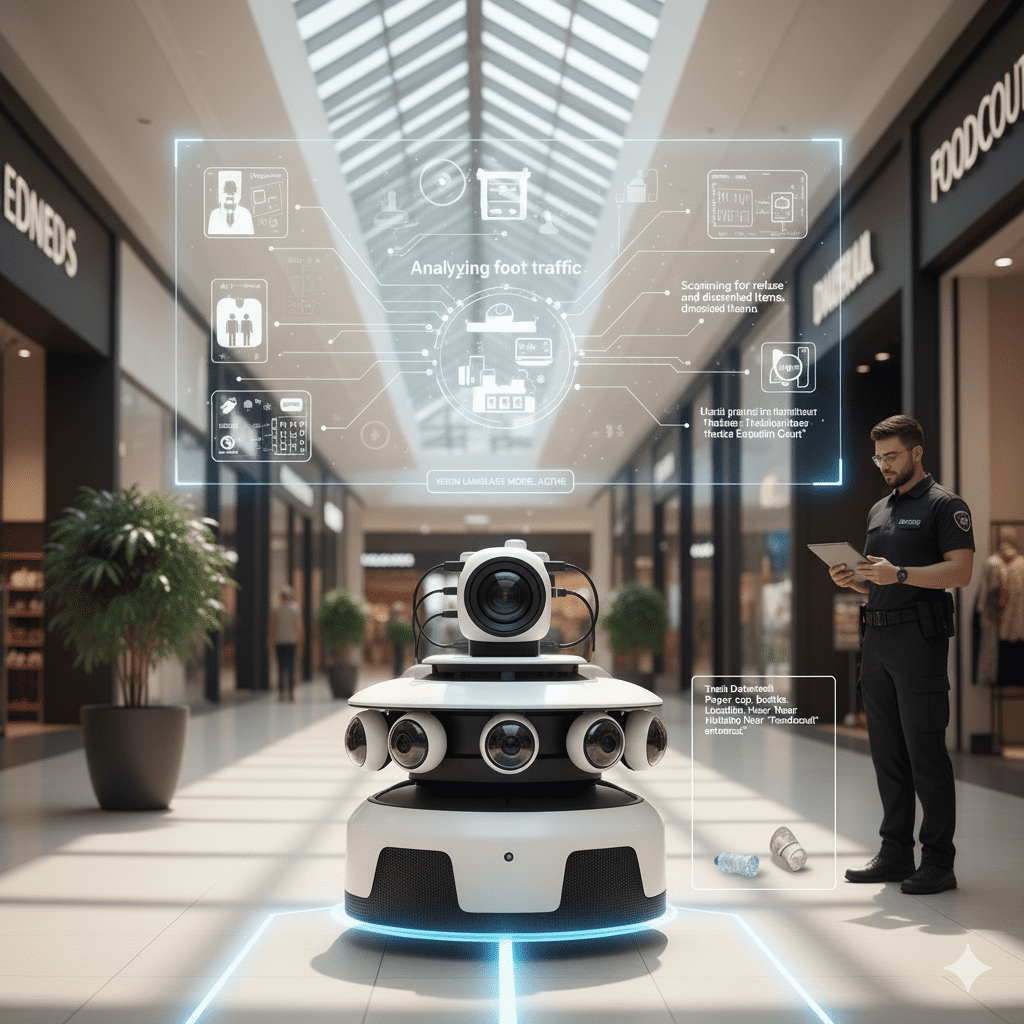

This leap from simple identification to real-world understanding is driven by Visual Language Models (VLMs), the next big breakthrough in AI.

For years, computer vision stopped at recognition. Older models could detect objects (a cat, a car, a cup) but couldn’t grasp the context, the meaning, or the relationships among them. It was like owning a dictionary without being able to form a complex, nuanced sentence.

VLMs change this. By combining seeing (computer vision) with language (large language models), they can describe a scene, relate the objects in it, and answer questions about it. They don’t just know what things are; they can reason about what is happening and why it matters.

Context-Aware Intelligence

VLMs can reason about the visual scene:

Beyond Labels: They identify “a vintage red sports car speeding down a coastal highway,” not just “car.”

Interactive Understanding: You can ask questions about an image, such as “Is this machine overheating?”, and get descriptive, practical answers.

Real-World Reasoning: They apply common sense, making technology more reliable and intuitive.
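To make the “Interactive Understanding” point concrete, here is a minimal sketch of how an image and a question might be bundled into a single request for a VLM. The function name `build_vqa_request` and every field name in the payload are hypothetical and chosen for illustration only; real VLM services each define their own request format, and this is not the CARRYAI API.

```python
import base64
import json


def build_vqa_request(image_bytes: bytes, question: str) -> dict:
    """Bundle an image and a question into one multimodal message.

    The field names below are hypothetical; real VLM services each
    define their own request schema.
    """
    if not question.strip():
        raise ValueError("question must not be empty")
    # Encode the raw image bytes as text so they can travel inside JSON.
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "image", "data": encoded, "encoding": "base64"},
                    {"type": "text", "text": question},
                ],
            }
        ]
    }


# Placeholder bytes stand in for a real photo read from disk.
request = build_vqa_request(b"placeholder image bytes", "Is this machine overheating?")
print(json.dumps(request, indent=2))
```

The key idea is that the picture and the question arrive together in one message, so the model can ground its answer in what it sees rather than in the text alone.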

This capability is transforming industries. In construction, manufacturing, and logistics, our CARRYAI VLM exemplifies this shift. It delivers Real-Time Site Intelligence—a customizable machine brain that instantly turns ‘Seeing’ into ‘Knowing’ by generating audit-ready reports tailored to your specific needs.

We are now entering an exciting new chapter where technology moves past merely seeing pixels to achieving genuine, intelligent visual comprehension.